Hi everyone. This is the R-Hack editorial office.

The days have gotten a lot longer.

When I wake up in the morning and open the curtain, the sun is bright and I feel that summer is near.

Commerce Tech recently held a tech event for QA. The event started last year, and this was the second time it was held. This time it took place online.

A total of 132 people signed up, with 114 concurrent connections and 20 questions during sessions.

Thank you.

Theme

The theme was "Utilizing STLC data for whole SDLC". Various data is generated in our software testing life cycle (STLC), and we would like to utilize this STLC data across the whole software development life cycle (SDLC). So we talked about the following:

1. Case study of how data is analyzed and used to improve processes

2. Using a snapshot of data to improve the efficiency of automation testing

3. Case study of using data for test management and reporting

4. Case study of using the resultant data of automation for automated execution

5. How the above data is created and managed

I hope you enjoy it!

Now let me introduce, in turn, the presentation materials and the questions we received.

Session#1: [Case study] Utilize STLC data for Process Improvement by Rajat Dayma

Q1: Can you tell us about QA's misconceptions (INVALID), with specific examples?

A1: A spec misunderstanding is purely a case where QA did not understand the specification correctly. For example, the specification says a red button, but the QA engineer misreads it as a blue button and mistakenly reports it as a bug.

Q2: How many testing environments are there in your project?

A2: We have multiple environments for each project. If everything is OK there, we release to production.

Q3: Do you analyze the data differently for each team, or are you analyzing it for Rakuten as a whole?

A3: For the SQA service, we analyze the data on a per-project basis, not for Rakuten as a whole.

Q4: Are there any entry and exit criteria for testing?

A4: Yes. They are based on the STLC and on the execution results of R1 and R2.

For example, if we don't find any incidents in R1, we don't do R2.

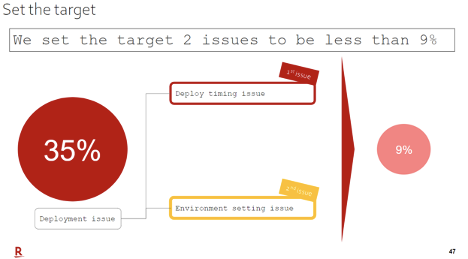

Q5: Could you show me page 47? How do you set your goals?

A5: Logically, there were two root causes.

36% = 18% + 18%.

We were 100% confident that one of them would be eliminated.

So, it would go from 18% to 0%.

But we were not confident about the other one, so based on our experience, we set the goal at half of what it was.

18% -> 9%

So 9% = 0% + 9% is the answer.

After implementing the solutions, however, we achieved 2.38%.

Session#2: Snapshot Regression Test by Satoru Awasawa

Q1: With manual testing we usually expect one or two runs, but automated tests must assume multiple test runs, right? Are there any cases where you have to create additional data for automation testing?

A1: When focusing only on this new automation framework, we don't need to prepare any data other than the snapshot data.

Q2: Is a snapshot still valid for a response that is time-dependent? For example, test data that changes between the morning and the afternoon.

A2: Basically there is no problem, but if the application uses the server time or a variable, you need to consider replacing it.

Q3: In what unit are the tests run? Once a day, per project, per pull request, etc.?

A3: Whenever source is pushed to a branch, the following tasks are kicked off in order:

1. Build

2. UnitTest(Front/API)

3. Deploy

4. IntegrationTest(SnapShotRegressionTest)
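The task order in A3 can be sketched as a tiny sequential runner. This is only an illustration, not the team's actual CI configuration: the `run_pipeline` helper and the placeholder tasks are hypothetical, and stopping at the first failing stage is an assumption that the answer does not state.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a task that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and return the names of the stages that completed.

    Assumption: the pipeline stops at the first failing stage.
    """
    completed = []
    for name, task in stages:
        if not task():
            break
        completed.append(name)
    return completed


# Placeholder tasks (hypothetical); a real pipeline would invoke the
# build tool, the test runners, and the deployment scripts here.
stages = [
    ("Build", lambda: True),
    ("UnitTest(Front/API)", lambda: True),
    ("Deploy", lambda: True),
    ("IntegrationTest(SnapShotRegressionTest)", lambda: True),
]

completed = run_pipeline(stages)
print(completed)
```

Under this assumption, a failing unit test would prevent both the deployment and the snapshot regression test from running.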

Q4: Do you support the native app?

A4: Sure

Q5: For a service as large as Rakuten Travel, the data set would be quite large. How long does it take to take and restore a snapshot?

A5: It takes around 15 minutes with our test data management. We want to shorten this to less than 1 minute. Please think about it with us!

Q6: Is there a difference in assertions when the response includes auto-numbering or system dates?

A6: Of course. We need to find a way to replace those values, either on the application side or in the automation framework. You know, nothing is impossible.
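One way to picture the replacement mentioned in A2 and A6 is to mask volatile fields on the automation side before comparing a response with its snapshot. The sketch below is a generic illustration, not the framework presented in the session: the `mask_volatile` helper, the field names `id` and `created_at`, and the sample payloads are all hypothetical.

```python
from typing import Any, Set

PLACEHOLDER = "<volatile>"


def mask_volatile(value: Any, volatile_keys: Set[str]) -> Any:
    """Return a copy of a JSON-like value with volatile fields replaced by a placeholder."""
    if isinstance(value, dict):
        return {
            key: PLACEHOLDER if key in volatile_keys else mask_volatile(item, volatile_keys)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [mask_volatile(item, volatile_keys) for item in value]
    return value


# Hypothetical field names for an auto-numbered ID and a system date.
VOLATILE_KEYS = {"id", "created_at"}

# Hypothetical payloads: the stored snapshot and a fresh response.
snapshot = {"id": 101, "created_at": "2000-01-01T09:00:00", "status": "reserved"}
actual = {"id": 102, "created_at": "2000-01-01T15:30:00", "status": "reserved"}

matches = mask_volatile(snapshot, VOLATILE_KEYS) == mask_volatile(actual, VOLATILE_KEYS)
print(matches)
```

With masking, the assertion still fails when a stable field such as `status` differs, but it ignores values that are expected to change on every run.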

Q7: Do you plan to publish the framework as OSS?

A7: There are some problems to solve first. We would like you to help us with them!

Q8: How are you going to maintain that data when the data changes after the snapshot is taken (e.g., columns are added)?

A8:

Session#3: Marketplace QA Introduction by Toshinori Serata

Q1: I understood that there's a problem with how wording is perceived. Did you have any ideas to solve that? For example, aligning it with ISTQB terminology or something similar.

A1: We follow the QA standard as the basis. We customized it to avoid misunderstandings among development members who are not familiar with the QA standard.

Q2: How do you separate your development and QA tasks?

A2: Currently, Dev checks from a class/method-level point of view, and the QA side checks from a shopper/merchant point of view. But there is a gray zone, such as design spec/system in the V-model. We plan to sort out this part based on the product line activity for each function.

Q3: What can be the goal for the line between development and QA? Release speed? Cost? Quality improvement?

A3: It relates to all of them. Since this activity reconsiders the QCD balance, it proceeds in steps. I feel the current balance prioritizes delivery, so first we will improve cost by reducing wasted and duplicated work, and then identify the actual QA points and cross-functional matters to improve quality.

Q4: You are talking about Rakuten Ichiba, with 25 Rakuten employees and 25 partner staff, right? If so, how do you distinguish between them?

A4: It is not the whole Ichiba team. For Marketplace QA, there are 3 FTEs with a larger number of PS members. PS members mainly do bridge work with the test execution team. To proceed with the test management improvement activity, we need more FTEs.

Q5: Is this team handling both the Japanese market and the global market?

A5: We cover Japan marketplace-specific matters and Japan/global common matters. Global-specific matters are covered by a different team in the same section.

LT#1: SQA Best Practices: A Practical Example by Rosana Sanchis

LT#2: Improve test automation operation by Sadaaki Emura

Q1: Does this re-testing consider whether a test is rerunnable or not?

A1: We re-execute the tests without making any such decision.

Basically, the scripts are built on the assumption that they can be rerun repeatedly, and they include mechanisms to meet that requirement.

Of course, there are some tests for which this cannot be done,

so we don't support them now.

This is because the cost reduction is not significant for them.
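Unconditional re-execution as described in A1 can be sketched as a small retry loop. This is a minimal illustration under stated assumptions, not the team's implementation: `run_with_rerun`, the limit of three attempts, and the simulated flaky test are all hypothetical.

```python
from typing import Callable


def run_with_rerun(test: Callable[[], bool], max_attempts: int = 3) -> int:
    """Rerun a failing test unconditionally, up to max_attempts times.

    Returns the attempt number on which the test passed, or 0 if it never passed.
    Assumption: the test is written so that it is safe to run repeatedly.
    """
    for attempt in range(1, max_attempts + 1):
        if test():
            return attempt
    return 0


# Simulated flaky test (hypothetical): it fails once, for example because
# of a temporary environment error, and then passes on the second run.
results = iter([False, True])


def flaky_test() -> bool:
    return next(results)


passed_on = run_with_rerun(flaky_test)
print(passed_on)
```

The loop makes no judgment about why a test failed, which only works when the scripts are idempotent, as the answer points out.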

Q2: Errors such as HTTP 500 are a common problem with test automation. Didn't you try to improve the test environment itself?

A2: As explained in the organization description, the development and QA organizations are independent of each other.

The testing environment is the responsibility of the development team.

Naturally, we send requests to development, and development handles them.

However, QA basically doesn't take the initiative on that.

See you all at the third conference!